Though it is a few years old, I have just read the European Union’s Artificial Intelligence Act. You will forgive me for not having been aware of it before now: Britain is no longer a member state, and so these are now the laws of a foreign power. The Act is presented as a landmark in responsible governance. According to the European Parliament, it is the world’s first comprehensive attempt to regulate artificial intelligence in line with “fundamental rights” and “European values.” The Act claims to draw clear moral boundaries, banning some uses of AI outright, imposing strict conditions on others, and requiring transparency wherever machines may influence human judgement.

This is the official story. It is also largely false. The AI Act will not prevent governments from using artificial intelligence for oppressive purposes. It cannot do so, because it is written by governments, enforced by governments, and riddled with the same exemptions, ambiguities, and moral escape hatches that characterise every other modern censorship regime. What it will do is slow the development and adoption of AI by private actors, and create a universal pretext for the regulation of speech itself.

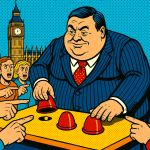

The Act is not a restraint on power. It is an instrument for reallocating power.

The centrepiece of the AI Act is its list of prohibited practices. Certain uses of AI are said to pose an “unacceptable risk” and are therefore banned. These include, most notably, “AI systems used for social scoring by public authorities” and systems that “manipulate human behaviour to circumvent users’ free will.”

The language is deliberately theatrical. It evokes a crude dystopia in which governments openly assign numerical virtue scores to citizens and deploy mind-control machines against the population. Such systems are easy to condemn because they do not exist in this form. Real governments do not operate like this. States already classify citizens by behaviour. They already assign risk profiles. They already decide who is trustworthy, who is suspicious, who deserves assistance, and who should be watched. They do this through welfare systems, policing thresholds, border controls, regulatory enforcement, and informal data sharing between agencies. None of this requires a commercial AI product labelled “social scoring.” None of it is prevented by the AI Act.

A government that wishes to automate these processes using machine learning will simply refuse the forbidden label. It will describe its system as decision support, as resource allocation, as safeguarding, as threat assessment. If challenged, it will invoke public safety, national security, or the protection of vulnerable groups. The Act itself explicitly allows exceptions for law enforcement and serious crime. The boundary between exception and norm is therefore left entirely to administrative discretion.

This is not a loophole. It is the operating principle of the modern state. We have seen this pattern repeatedly in the field of censorship. Laws introduced to combat terrorism, hate, or misinformation are steadily reinterpreted to suppress lawful dissent. Definitions expand. Thresholds fall. Exceptional powers become permanent. The AI Act assumes that this will not happen again. That assumption is either naïve or dishonest.

The Act’s defenders emphasise its “risk-based” approach. AI systems are divided into unacceptable risk, high risk, limited risk, and minimal risk. This is presented as a neutral, technical framework. It is neither neutral nor technical. An AI system is deemed “high risk” when it operates in areas such as employment, education, creditworthiness, policing, migration, or healthcare. These are not areas chosen because AI performs badly in them. They are chosen because they are areas in which the state already claims moral authority and resists disruption.

Developers of high-risk systems must ensure “appropriate human oversight,” maintain extensive technical documentation, mitigate bias, log decisions, and register their systems in an EU database. These requirements are not designed to improve outcomes. They are designed to slow entry by raising costs, and to ensure that innovation proceeds only with bureaucratic permission.

The state does not subject itself to comparable scrutiny. It cannot. It writes the rules. Internal systems are quietly reclassified. Exemptions are granted. Oversight remains internal. The burden of proof runs in one direction only. Private actors must prove innocence. Public actors assume virtue.

The most dangerous provisions of the AI Act concern transparency. The Act requires that users be informed when they are interacting with an AI system. It requires that “AI-generated content” be disclosed, including deepfakes, unless their artificial nature is obvious. This is justified on the grounds that citizens have a right to know. In practice, this creates an entirely new category of suspect speech. As artificial intelligence becomes embedded in writing, editing, translation, summarisation, and research, the distinction between “organic” and artificial output becomes meaningless. A sentence drafted with AI assistance but revised by human judgement is no less human for that fact. A text written without assistance but reproducing official clichés is no more authentic. The Act ignores this reality because acknowledging it would collapse the regulatory distinction on which the law depends.

Mandatory disclosure does not clarify speech. It classifies speakers. Once AI-assisted expression is formally marked, all expression becomes regulable. If a text is politically inconvenient, suspicion can be raised that it was machine-assisted. If suspicion exists, disclosure can be demanded. If disclosure is judged inadequate, sanctions follow. The content itself need never be addressed. The tool becomes the crime.

This is precisely how censorship already operates. AI transparency simply provides a new justification. Enforcement will not be even. It never is. The citizen will be legible. The state will remain opaque. The AI Act assumes good faith on the part of governments. This assumption is fatal. States evade constraints as a matter of routine. They reclassify. They redefine. They plead necessity. They lie. They do this not because they are uniquely wicked, but because power behaves this way when it is not constrained by competition or exit.

A government accused of violating the AI Act will not concede the point. It will argue that its system is not AI within the meaning of the law. It will claim that decisions are advisory rather than automated. It will invoke emergency conditions. It will cite national security. It will insist that safeguards exist, even when those safeguards are internal and unverifiable.

Private developers cannot do this. Citizens cannot do this. Only the state can. The AI Act therefore does not constrain oppression. It monopolises it.

The Act’s treatment of “general-purpose AI” and “foundation models” reveals its deeper anxiety. Here the concern is not specific harm, but capacity. Large models are treated as dangerous because they are adaptable, because they escape easy supervision, because they cannot be confined to a single approved purpose. The Act speaks of “systemic risk,” borrowing language from financial regulation. This is revealing. The fear is not that AI will do something harmful, but that it will do something unexpected without permission. This is the logic of bureaucratic power. Intelligence that does not belong to an institution is suspect by definition.

While Europe regulates, others experiment. In the United States, artificial intelligence will develop through competition and consumer demand. It will be uneven and wasteful. It will move quickly. In China, development will proceed under state direction, with little interest in European moral language. It will be strategic and unapologetic. Europe will do neither. It will refine definitions, update guidance, and expand compliance offices. It will then import the resulting technologies from abroad, subject to licensing conditions it did not negotiate from a position of strength.

Britain is unlikely to escape this outcome. Despite Brexit, British regulators speak the same dialect. They use the same vocabulary of trust and alignment. Alignment with Brussels will be presented as pragmatism. It will amount to intellectual dependence.

The deepest defect of the AI Act is not ideological. It is epistemic. We do not yet know what artificial intelligence will become. We do not know which applications will matter, which will fail, which will cause harm, and which will quietly improve ordinary life. The Act behaves as though these questions have already been answered by committees.

A serious civilisation would have allowed the technology to develop and observed how people actually used it. Then it would have intervened where concrete harms appeared. Europe has chosen the opposite course. It has legislated first and postponed understanding.

Artificial intelligence will be used for control. Of that there is no doubt. The EU AI Act will not prevent this. It will merely ensure that such uses are monopolised by the state and insulated from challenge by regulatory formality. Europe has mistaken regulation for wisdom. It has done so before. The consequences will be familiar.
